With artificial intelligence reshaping industries, economies, and everyday life, a question arises: Will it transform computing technology too? Will it become self-sustaining and operate by itself?

AI models are growing in capability and intelligence, but that growth comes with a huge computational appetite, and organizations are wondering what kind of computing hardware will power this future.

Neuromorphic computing is a radical rethinking of how computers operate. It is inspired by the human brain, the very organ that gave rise to human intelligence.

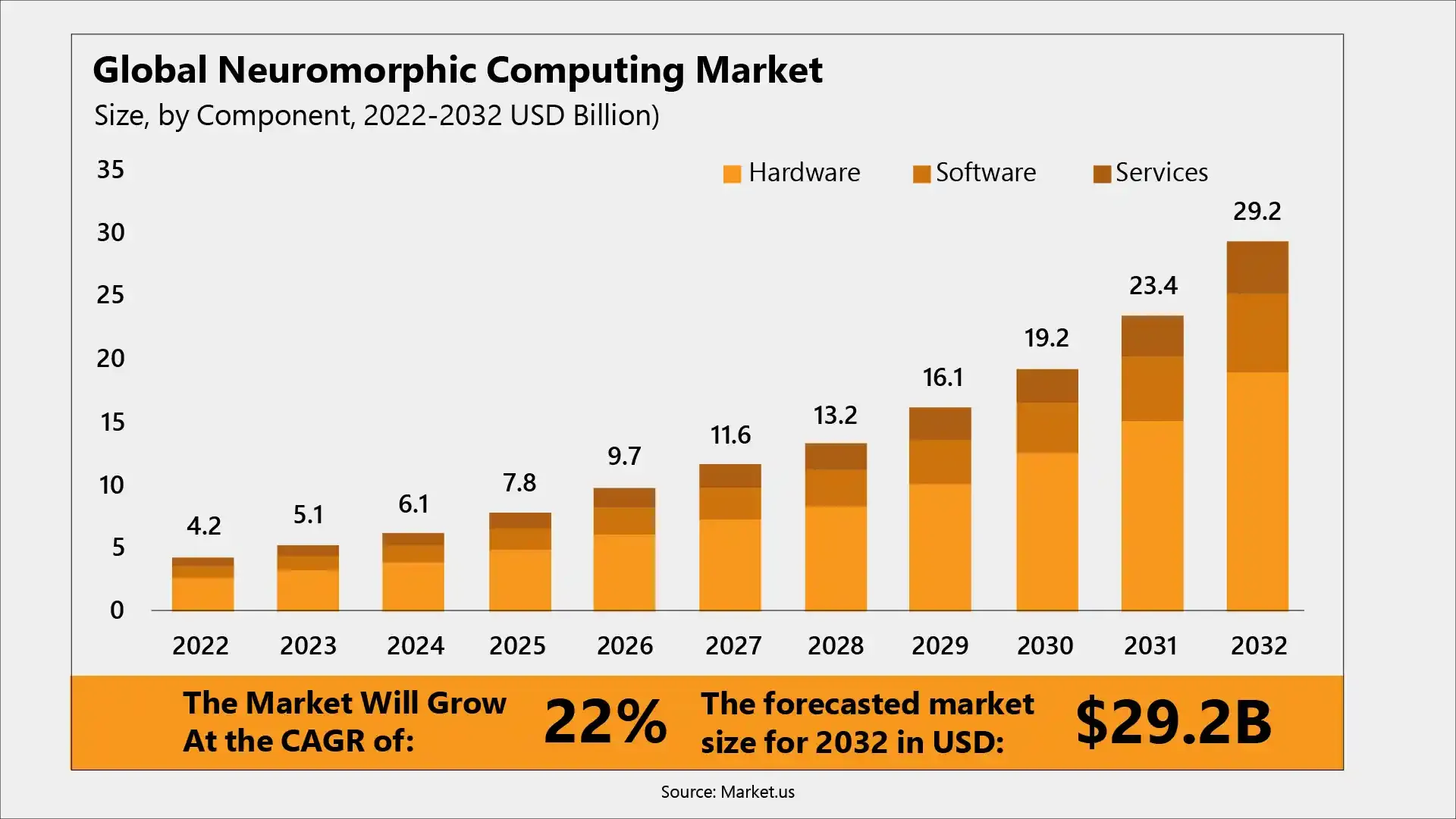

According to market.us, the neuromorphic computing market is expected to reach nearly $13.2 billion by 2028, up from $9.7 billion in 2026, exhibiting a CAGR of 22%.

Traditional processors like CPUs and GPUs execute instructions in a fixed sequence. Neuromorphic systems are different: they mimic the structure and operation of the neural networks found in biological brains.

These systems rely on event-driven computing and spiking neural networks (SNNs), in which data flows as “spikes” of activity, similar to electrical impulses travelling through neurons. This promises energy-efficient, real-time, and adaptive computing that was previously hard to attain.
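To make the idea of a spike concrete, here is a minimal sketch of a leaky integrate-and-fire neuron, a model commonly used to introduce SNNs. The function name `simulate_lif` and the parameter values (`threshold`, `leak`, `reset`) are illustrative assumptions, not taken from any particular chip or library.

```python
def simulate_lif(input_current, threshold=1.0, leak=0.9, reset=0.0):
    """Simulate a discrete-time leaky integrate-and-fire neuron.

    Returns a list of 0/1 values, one per input sample (1 = spike).
    All parameter values are illustrative assumptions.
    """
    potential = 0.0
    spikes = []
    for current in input_current:
        # The membrane potential decays (leaks) and then integrates the input.
        potential = leak * potential + current
        if potential >= threshold:
            spikes.append(1)   # fire a spike ...
            potential = reset  # ... and reset the membrane potential
        else:
            spikes.append(0)
    return spikes


quiet = simulate_lif([0.0] * 10)  # no input, so no spikes
busy = simulate_lif([0.5] * 10)   # steady input, so periodic spikes
print(quiet)
print(busy)
```

With no input the neuron stays silent, and downstream neurons have nothing to process. This is the sense in which computation is event-driven: work happens only when spikes occur.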

How is Neuromorphic Computing Different?

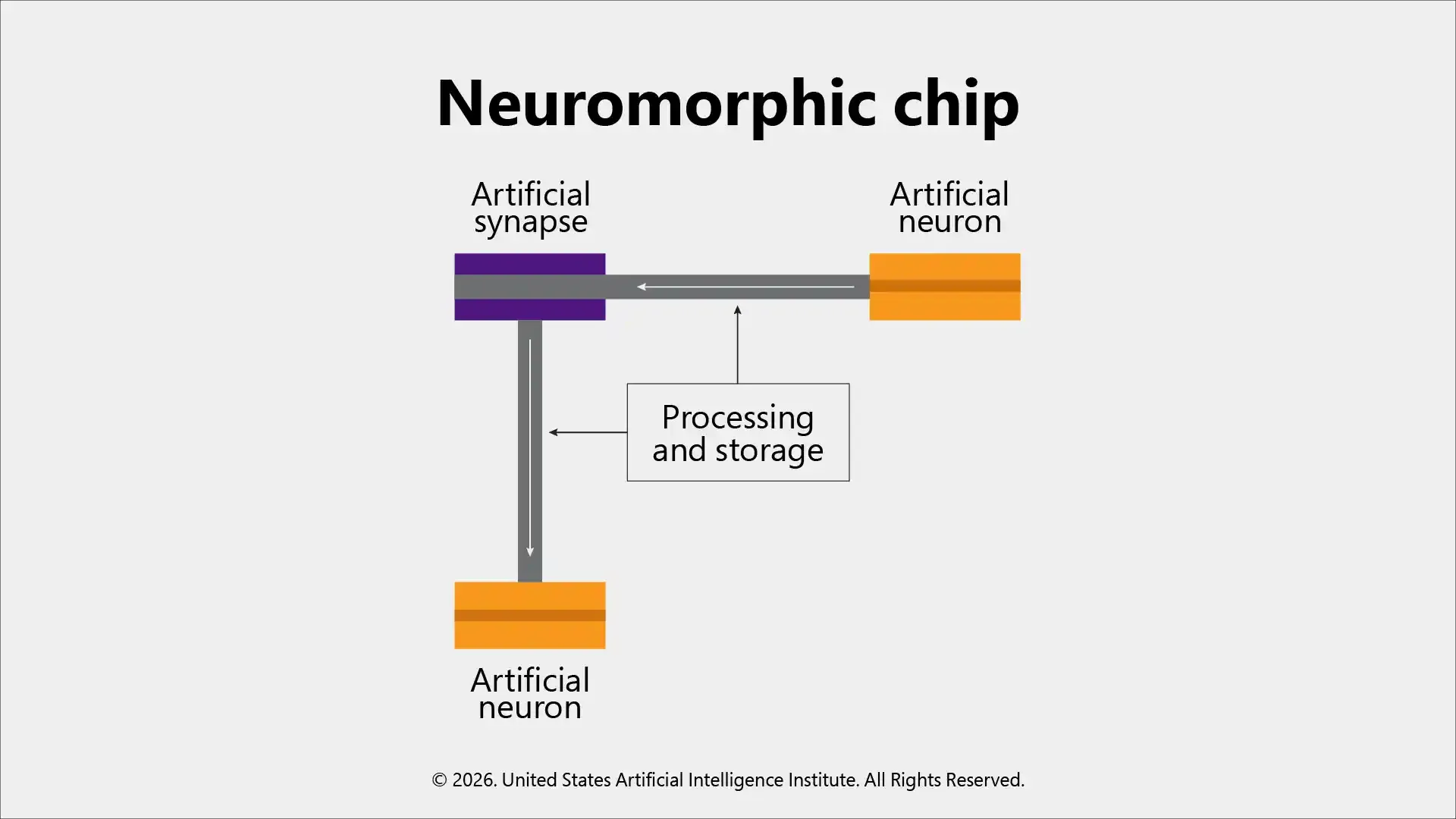

At the core of neuromorphic computing lies hardware inspired by the human brain. Unlike von Neumann architectures, whose hallmark is the separation of memory and processing, it places memory alongside computation, similar to how biological synapses and neurons work. The diagram above shows the components of a neuromorphic chip and how they resemble their counterparts in the human brain.

Machine learning algorithms must be redesigned to operate efficiently on spiking neural networks and event-driven neuromorphic hardware.

This leads to:

Here’s a simple example to understand this: neuromorphic chips can be embedded in small devices, such as always-on cameras, robots, or speech recognition devices, to process sensory data continuously with minimal battery drain. Neuromorphic systems are considered to be 100 times more energy efficient than traditional processors in some tasks.
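One reason always-on sensing can be cheap is that an event-driven sensor reports only changes. The sketch below illustrates that idea with a simple change-detection encoder. The function `to_events` and its `threshold` parameter are hypothetical names chosen for illustration, and the code makes no claim about actual energy savings.

```python
def to_events(samples, threshold=0.1):
    """Convert a sampled signal into (index, polarity) change events.

    An event is emitted only when the signal has moved by at least
    `threshold` since the last emitted event. Polarity is +1 for an
    increase and -1 for a decrease.
    """
    events = []
    if not samples:
        return events
    reference = samples[0]
    for i, value in enumerate(samples[1:], start=1):
        delta = value - reference
        if abs(delta) >= threshold:
            events.append((i, 1 if delta > 0 else -1))
            reference = value  # track the level at the last event
    return events


# A mostly static signal with one brief change (hypothetical sensor data).
signal = [0.0, 0.0, 0.0, 0.5, 0.5, 0.5, 0.5, 0.0, 0.0, 0.0]
events = to_events(signal, threshold=0.25)
print(events)  # [(3, 1), (7, -1)]
```

Ten samples collapse into two events, so downstream processing scales with how much the scene changes rather than with the sampling rate.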

The Rise of Neuromorphic Hardware: Traditional AI’s Hardware Bottleneck

The rapid growth of deep learning over the past few years was powered mostly by GPUs and TPUs, which are specialized accelerators. Although these architectures excel at parallel arithmetic, they come with certain challenges, such as:

An AI and ML engineer working with neuromorphic systems needs to understand both model optimization and hardware-level constraints.

Neuromorphic hardware tackles these constraints head-on. It emulates brain-like computation and can therefore deliver high performance with very little power consumption. This opens the door to truly local intelligence on edge AI devices.

It is not just hardware that is evolving rapidly; the relationship between AI and human work is changing too. The recent article “The Rise of Artificial Intelligence and the Future of Human Work” discusses how AI is rising and why organizations need to focus on human-centric AI adoption.

Where does Neuromorphic Computing Shine Today?

Though neuromorphic computing is still in the development phase, several real-world use cases demonstrate its transformative potential. Here are a few examples.

Neuromorphic systems can process sensory input with minimal latency and power consumption in applications that demand quick decisions, such as autonomous drones or wearable health monitors.

Neuromorphic chips can power applications ranging from adaptive prosthetics to continuous patient monitoring, enabling responsive systems without heavy hardware. They can interpret physiological signals in real time and help with diagnostics.

Neuromorphic systems with spike-based encoding are being applied to tasks such as anomaly detection, malware analysis, and intrusion detection, where energy efficiency and real-time response matter greatly and where on-device processing can improve resilience and privacy.

Adaptive neuromorphic processors could monitor complex environments in fields like logistics or industrial control systems, optimizing processes instantly and maintaining system stability under dynamic conditions.

As this field grows, specialized Machine Learning Certifications can help professionals build expertise in hardware-aware AI development.

Challenges to Neuromorphic Computing Adoption

Neuromorphic computing is a highly transformative and promising technology. However, major roadblocks must be overcome before it can challenge mainstream hardware. Some significant challenges include:

Because there are no clear standard benchmarks, architectures, or software interfaces, it is difficult to measure performance and validate results.

Neuromorphic systems are difficult to design and program because conventional computing models are built around the von Neumann architecture. As a result, existing programming tools are poorly suited to spiking neural networks.

This technology requires expertise across multiple disciplines, including neuroscience, AI, physics, and hardware engineering. Unfortunately, training resources are limited and the global expert community is small.

Most importantly, converting traditional deep learning models into spiking neural networks can cause performance loss and lower accuracy compared with the original conventional models.
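One source of this conversion loss can be seen in a small sketch. It assumes rate coding, one common conversion approach in which a continuous activation is represented by how often a neuron fires over a fixed number of timesteps. The function `spike_rate` and the chosen values are illustrative assumptions, not the method of any specific toolchain.

```python
def spike_rate(activation, timesteps):
    """Approximate a non-negative activation by a firing rate.

    Uses an integrate-and-fire neuron with threshold 1.0 and
    reset-by-subtraction, simulated for `timesteps` steps.
    """
    potential = 0.0
    spikes = 0
    for _ in range(timesteps):
        potential += activation
        if potential >= 1.0:
            spikes += 1
            potential -= 1.0  # keep the remainder for later steps
    return spikes / timesteps


target = 0.375  # a hypothetical activation value from a conventional network
errors = [abs(target - spike_rate(target, t)) for t in (2, 4, 8)]
print(errors)  # [0.375, 0.125, 0.0]
```

With only a few timesteps the spike count is too coarse to represent the original value, and running longer reduces the error at the cost of latency and energy. That trade-off is one reason converted models can lose accuracy.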

Is Neuromorphic Computing the Future of AI Hardware?

Well, it is not the right time to answer with a simple yes or no. But neuromorphic computing is undoubtedly an important element of future AI hardware.

Conventional CPUs and GPUs will remain important for large-scale training, foundation models, and data center workloads. But for efficient, autonomous, real-time edge AI, neuromorphic architectures are expected to offer better performance.

This hardware is therefore meant to complement, not replace, mainstream systems. It fits into a hybrid AI computing ecosystem in which each architecture plays a role suited to its strengths.

Deployment trends highlight that edge devices accounted for nearly 60% of neuromorphic chip revenue, and this segment is projected to grow at over 50% CAGR through 2031, driven by AI at the edge (Source: Mordor Intelligence).

A Paradigm Shift in Motion

Neuromorphic computing represents a paradigm shift, from rigid instruction-based systems to more adaptive, brain-like computational architectures.

Of course, many hurdles, including software, manufacturing, and integration challenges, must be overcome before widespread adoption. Even so, the technology is highly promising, especially for edge AI, robotics, healthcare, and autonomous systems.

With AI becoming deeply embedded in our daily lives and real-world intelligence developing rapidly, neuromorphic computing could sooner or later become a foundation of next-generation AI hardware, powering smarter, faster, and more efficient intelligent systems that work much as the human brain does.
